free software

Linux, open source software, tips and tricks.

Debian’s “dist-upgrade”

There seems to be a common misconception about Debian’s package manager “apt”, that the command “dist-upgrade” is used to upgrade to a new release. It is, but it isn’t. I wanted to clarify that here.

Basically, there are 4 things that you might want to do as part of upgrading a system.

- apt-get update – updates the list of available packages and versions

- apt-get upgrade – upgrades packages that you already have

- apt-get dist-upgrade – upgrades packages that you already have, PLUS installs any new dependencies that have come up

- edit the sources files – change the release that you are tracking

That means that to freshen up your packages to the latest versions on your current release, you should do “apt-get update && apt-get dist-upgrade”. On some systems that track “testing”, which changes often, I do this almost daily.

When you’re ready to “really upgrade” to a new release, you edit your sources files (/etc/apt/sources.list and anything in /etc/apt/sources.list.d/) and change the release names. If the source lists contain proper release names, like “etch”, “lenny”, “squeeze” or “wheezy”, then you change these names to the new release that you want (see http://www.debian.org/releases/). If the source lists contain symbolic names like “stable”, “testing” and “unstable”, you do not need to change anything. When a new release is ready, the Debian people will change the symbols to point to the new release names. For example, right now, stable=squeeze and testing=wheezy.
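As a rough sketch of that edit, the release name can be swapped with sed instead of a text editor. The function name retarget_release is my own invention (it is not part of apt), and the plain substitution assumes the old release name does not also appear in a mirror hostname or path, so review the output before using it.

```shell
#!/bin/sh
# retarget_release OLD NEW
# Hypothetical helper: reads sources.list-style lines on stdin and writes
# them to stdout with the release name OLD replaced by NEW.
retarget_release() {
    sed "s/$1/$2/g"
}

# Preview the change for a typical line without touching any file.
printf 'deb http://ftp.debian.org/debian squeeze main\n' | retarget_release squeeze wheezy
```

To apply it for real, you could run something like "retarget_release squeeze wheezy < /etc/apt/sources.list > /tmp/sources.list.new", inspect the new file, and copy it into place before running the usual update and dist-upgrade.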

Note 1 – “unstable” never points to a named release… it’s the pre-release proving ground for packages, used before they are ready for inclusion in the testing release.

Note 2 – Don’t let these symbolic names fool you:

- “Stable” means “old, tried, tested, and rock solid”. It’s a very conservative choice.

- “Testing” does not mean “chaotic”. It is roughly the equivalent of Red Hat’s Fedora. It’s new stuff, and each package changes on its own schedule, but they usually play well together.

- “Unstable” is not nearly as unstable as the name implies. It’s like a beta release that may be updated daily.

After your source lists look OK, you do the same thing you’ve always done: “apt-get update && apt-get dist-upgrade”.

If you’re running Ubuntu, the release names are at http://releases.ubuntu.com/. And they’ve made a nice wrapper script called “do-release-upgrade” that basically edits your source lists and does the dist-upgrade for you (it also does some other nice steps, like letting you review the changes).

So there it is… fear not the “dist-upgrade”. In fact, most of the time, it is what you’ll want to run. It will make sure that you have all of the dependencies that you need.

Host your own calendar server – iPhone client

I am a bit of a do-it-yourselfer. When looking for a new service like an online photo gallery, my first instinct is to find an open source package where I can host photos on my own web server. I know that many will skip this step and go directly to Picasa.

These days, it seems to come down to those choices: do it yourself, or give another slice of your life to Google. In this post, we’re going to do it ourselves.

Ever since the days of the Palm Pilot, I have made heavy use of the calendar application. When I switched to the iphone in 2009, I decided that I wanted to use an online calendar that I could access from my laptop and from the iphone. I looked at the open source world, and I found a package called DavICal, by Andrew McMillan in New Zealand. It uses the CalDAV protocol to exchange event information with clients like the iphone’s calendar, iCal on the Mac, Mozilla Thunderbird with the Lightning plug-in, Mozilla Sunbird, and (I think) Outlook.

Setting up the server is pretty well documented on the DavICal web site. On a Debian-based server, it’s a simple “apt-get” operation (after you add their site to your sources list).

Below, I will talk about how my calendars are organized, and then I’ll walk through the steps of setting up a new user and calendars on the server, and then setting up the iphone to access these new calendars.

How I organize my calendars

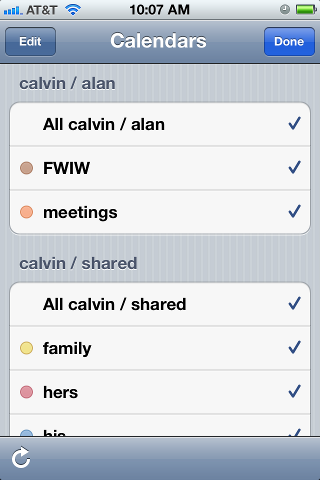

I organized my calendars in two groups, owned by users “alan” and “shared”. The “alan” user owns a set of calendars that I use, but that my family does not care about. The “shared” user is stuff that we all need to see. This might seem a little weird, but it makes things work out well on the iphone. Below is a picture of the iphone’s “calendars” screen, which shows this grouping. Notice the orange calendar called “meetings”. I have meetings at work all day, and my wife really doesn’t need to see them on her ipod, and she does not need to hear the reminders throughout the day. So the meetings calendar is owned by the “alan” user. On the other hand, we all need to know about family events (yellow), “his” stuff (blue) and “her” stuff (red). That stuff is owned by the “shared” user.

Below, when we set up the iphone calendar accounts, I will have two accounts (“alan” and “shared”), and my wife will only have one (“shared”). I am sure there are other ways to get the permissions to work out, but this is dead-simple, and it shows the division in the screen shot below.

In case you wondered, “calvin” is my server. And “FWIW” stands for “for what it’s worth”… stuff that I want to know about, but that I am not planning on attending. I want to know when Mardi Gras and Burning Man and DefCon are taking place, but I am not going to any of them.

On the server

We’ll start with the DavICal web interface, which is functional, but not very polished. You need a user account, and that user can own several calendars (called “collections”).

To create a user on the DavICal web interface, you need to log in as an administrator and use the menu to “create a principal” of type “person”. See? Precise, carefully worded, but not so user-friendly.

The new user can now add calendars by logging into the same web interface and selecting “User Functions” and “List My Details”. That screen shows a bunch of junk you don’t care about at the top. But at the bottom, there is a list of “collections”. That’s the CalDAV term for a calendar. Use the “Create Collection” button to make one or more calendars. Make sure to give them a “display name”. That’s the name that will show up in the iphone’s calendar list.

On the iphone

Once you have the calendars on the web system, the iphone part is easy. Go to “Settings”, “Mail, contacts, calendar”, and “add account” of type “other”. Select “CalDAV”. Enter four things:

Server=(the hostname of your CalDAV server)
Username=(your new user's name)
Password=(your password)
Description=(some name)

When you hit “next”, it may pop up a box complaining about SSL certificates. In my case, I don’t have proper SSL certificates (setting up SSL on the server is a future blog post), so I just say “continue”. At this point, you wait… and wait… for the iphone to guess all of the rest of the settings it can think of.

It will finally finish, and you’ll have to again tell it “don’t use SSL”. But surprisingly, it should have figured out the rest of the settings.

SSL=off
port=80
URL=http://USER@SERVER/caldav.php/USER

At that point, when you open the iphone’s calendar application, your server should show up in the calendar list, with all of the individual calendar names below it.
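If the iphone does not guess the URL on its own, the pattern above is easy to build by hand. Here is a small sketch; caldav_url is a made-up helper name, and the example values (“alan”, “shared” and my server “calvin”) are just the ones from this post.

```shell
#!/bin/sh
# caldav_url USER SERVER
# Hypothetical helper: prints the account URL in the
# http://USER@SERVER/caldav.php/USER pattern described in this post.
caldav_url() {
    printf 'http://%s@%s/caldav.php/%s\n' "$1" "$2" "$1"
}

caldav_url alan calvin
caldav_url shared calvin
```

Each user gets its own URL, which is why my phone ends up with two accounts and my wife’s has only one.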

Misleading VNC Server error – fontPath

I am working from home today, which involves a combination of working locally on my laptop, but also remotely accessing some resources at the office. My current tool of choice for accessing the PC on my desk is VNC.

A note for Windows users — a VNC server on Windows will share the desktop session that is on your monitor. The default VNC server on Linux does not do this. Instead, it creates a brand new session that is completely independent of what’s on the monitor. If a Linux user wants the same experience that Windows users are used to, he can run “x11vnc”, a VNC server that copies the contents of whatever’s on the monitor and shares that with the remote user.

So when I access my PC remotely, I shell in to my desktop PC and I start a VNC server. I can specify the virtual screen size and depth, which is useful if I want to make it large enough to fill most of my laptop screen, but still leave a margin for stuff like window decorations. The command I use looks like this:

vncserver :1 -depth 32 -geometry 1600x1000 -dpi 96

This morning, when I started my VNC session, it responded with some errors about my fonts. Huh?

Couldn't start Xtightvnc; trying default font path.
Please set correct fontPath in the vncserver script.
Couldn't start Xtightvnc process.
21/02/12 10:40:55 Xvnc version TightVNC-1.3.9
21/02/12 10:40:55 Copyright (C) 2000-2007 TightVNC Group
21/02/12 10:40:55 Copyright (C) 1999 AT&T Laboratories Cambridge
21/02/12 10:40:55 All Rights Reserved.
21/02/12 10:40:55 See http://www.tightvnc.com/ for information on TightVNC
21/02/12 10:40:55 Desktop name 'X' (chutney:2)
21/02/12 10:40:55 Protocol versions supported: 3.3, 3.7, 3.8, 3.7t, 3.8t
21/02/12 10:40:55 Listening for VNC connections on TCP port 5902
Fatal server error: Couldn't add screen
21/02/12 10:40:56 Xvnc version TightVNC-1.3.9
21/02/12 10:40:56 Copyright (C) 2000-2007 TightVNC Group
21/02/12 10:40:56 Copyright (C) 1999 AT&T Laboratories Cambridge
21/02/12 10:40:56 All Rights Reserved.
21/02/12 10:40:56 See http://www.tightvnc.com/ for information on TightVNC
21/02/12 10:40:56 Desktop name 'X' (chutney:2)
21/02/12 10:40:56 Protocol versions supported: 3.3, 3.7, 3.8, 3.7t, 3.8t
21/02/12 10:40:56 Listening for VNC connections on TCP port 5902
Fatal server error: Couldn't add screen

I scratched around for a while, looking at font settings. Then I tried some experiments. I changed the dimensions from 1600×1000 to 800×600. Suddenly it worked.

Then I noticed that the VNC startup script makes some assumptions. If the VNC server can not start, it assumes that the problem is with the fonts, and it tries again using the default font path. In my case, the VNC server failed because I had specified a large screen (1600x1000x32) and there was already another session on the physical monitor. VNC could not start because it had run out of video memory.

So my solution? I had a choice. I could reduce the size or depth of the virtual screen, or I could kill the existing session that was running on my desktop.
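To get a feel for how much the screen size matters, here is a back-of-the-envelope calculation. It only estimates one uncompressed screen buffer (width × height × bytes per pixel); what the server really allocates is an assumption on my part, and framebuffer_bytes is just a name I made up.

```shell
#!/bin/sh
# framebuffer_bytes WIDTH HEIGHT DEPTH_BITS
# Rough size of one uncompressed screen buffer, in bytes.
# This is an estimate only, not what Xtightvnc actually allocates.
framebuffer_bytes() {
    echo $(( $1 * $2 * $3 / 8 ))
}

framebuffer_bytes 1600 1000 32   # the geometry that failed
framebuffer_bytes 800 600 32     # the geometry that worked
```

By this estimate the large screen needs about 6.4 million bytes, while the small one needs about 1.9 million, so dropping the size or the depth makes a big difference.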

Don’t believe everything you read. That error about the fontPath is misleading. The rest of the error message tells you what’s really going on: it could not start the Xtightvnc process, because it “couldn't add screen”.

Chrome and LVM snapshots

This is crazy.

I was trying to make an LVM snapshot of my home directory, and I kept getting this error:

$ lvcreate -s /dev/vg1/home --name=h2 --size=20G
/dev/mapper/vg1-h2: open failed: No such file or directory
/dev/vg1/h2: not found: device not cleared
Aborting. Failed to wipe snapshot exception store.
Unable to deactivate open vg1-h2 (254:12)
Unable to deactivate failed new LV. Manual intervention required.

I did some Googling around, and I saw a post from a guy at Red Hat that says this error had to do with “namespaces”. And by the way, Google Chrome uses namespaces, too. Followed by the strangest question…

I see:

“Uevent not generated! Calling udev_complete internally to avoid process lock-up.”

in the log. This looks like a problem we’ve discovered recently. See also:

http://www.redhat.com/archives/dm-devel/2011-August/msg00075.html

That happens when namespaces are in play. This was also found while using the Chrome web browser (which makes use of the namespaces for sandboxing).

Do you use Chrome web browser?

Peter

This makes no sense to me. I am trying to make a disk partition, and my browser is interfering with it?

Sure enough, I exited chrome, and the snapshot worked.

$ lvcreate -s /dev/vg1/home --name=h2 --size=20G
Logical volume "h2" created

This is just so weird, I figured I should share it here. Maybe it’ll help if someone else runs across this bug.
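If you want to guard against this in a script, a pre-flight check might look something like the sketch below. The helper name has_process is hypothetical, and the process name “chrome” is an assumption; on your system the browser may show up as “chromium” or something else.

```shell
#!/bin/sh
# has_process NAME
# Reads one process name per line on stdin and succeeds only if NAME
# appears as an exact, whole-line match.
has_process() {
    grep -qxF -- "$1"
}

# Intended use before taking a snapshot (assumes a procps-style ps):
#   if ps -e -o comm= | has_process chrome; then
#       echo "Chrome is running; close it before running lvcreate -s" >&2
#   fi
```

The exact-match flags keep it from tripping on unrelated processes whose names merely contain the word.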

Suspend & Hibernate on Dell XPS 15

In November, I got a new laptop, a Dell XPS 15 (also known as model L501X). It’s a pretty nice laptop, with a very crisp display and some serious horsepower.

I first installed Ubuntu 10.10 on it, and almost everything worked out of the box. But it would not suspend (save to RAM) or hibernate (save to disk) properly. I quickly found a solution to this problem on the internet. It had to do with two drivers that need to be unloaded before suspending/hibernating: the USB3 driver and the Intel “Centrino Advanced-N 6200” wireless a/b/g/n driver.

The solution is to create two files, shown below, that will unload the driver before suspending and reload the driver when it starts back up.

In /etc/pm/config.d/custom-L501X:

# see http://ubuntuforums.org/showthread.php?t=1634301
SUSPEND_MODULES="xhci-hcd iwlagn"

In /etc/pm/sleep.d/20_custom-L501X:

#!/bin/sh
# see http://ubuntuforums.org/showthread.php?t=1634301
case "${1}" in
    hibernate|suspend)
        echo -n "0000:04:00.0" | tee /sys/bus/pci/drivers/iwlagn/unbind
        echo -n "0000:05:00.0" | tee /sys/bus/pci/drivers/xhci_hcd/unbind
        ;;
    resume|thaw)
        echo -n "0000:04:00.0" | tee /sys/bus/pci/drivers/iwlagn/bind
        echo -n "0000:05:00.0" | tee /sys/bus/pci/drivers/xhci_hcd/bind
        ;;
esac

A little while later, I “upgraded” to Ubuntu 11.04, but then suspend/hibernate stopped working. Argh!! I tried a lot of work-arounds, but nothing worked.

What’s particularly frustrating about this sort of problem is that you end up finding a lot of posts on forums where some guy posts a very terse statement like “I put ‘foo’ in my /etc/bar and it worked”. So you end up stabbing a lot of configuration settings into a lot of files without actually understanding how any of it works.

From what I can piece together, it looks like the Linux suspend/hibernate process has been handled by several different subsystems over time. These days, it is handled by the “pm-utils” package and the kernel. PM utils figures out what to do, where to save the disk image (your swap partition), and then it tells the kernel to do the work. There are a lot of other packages that are not used any more: uswsusp, s2disk/s2ram, and others (please correct me if I am wrong).

I never really liked what I saw in Ubuntu 11.04, and so I tried a completely new distro, Linux Mint Debian Edition. This distro combines the freshness of the Debian “testing” software repository with the nice desktop integration of Linux Mint. Admittedly, it may be a little too bleeding edge for some, and it’s certainly not for everybody. But so far, I like it.

When I first installed LMDE, suspend and hibernate both worked. But then I updated to a newer kernel and it stopped working. So, just like with Ubuntu, something in the more recent kernel changed and that broke suspend/hibernate.

I tried a few experiments. If I left the two custom config files alone, the system would hang while trying to save to RAM or to disk. If I totally removed my custom config files, I could suspend (to RAM), and I could hibernate (to disk), but I could not wake up from hibernating (called “thawing”). I determined through lots of experiments that the USB3 driver no longer needed to be unloaded, but the wireless driver does need to be unloaded.

So with the newer 2.6.39 kernel, the config files change to this, with the xhci_hcd lines removed.

In /etc/pm/config.d/custom-L501X:

SUSPEND_MODULES="iwlagn"

In /etc/pm/sleep.d/20_custom-L501X:

#!/bin/sh
case "${1}" in
    hibernate|suspend)
        echo -n "0000:04:00.0" | tee /sys/bus/pci/drivers/iwlagn/unbind
        ;;
    resume|thaw)
        echo -n "0000:04:00.0" | tee /sys/bus/pci/drivers/iwlagn/bind
        ;;
esac
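The PCI addresses in these scripts (like 0000:04:00.0) are specific to my laptop. As a sketch of how you might look up the address on another machine, the function below lists the devices currently bound to a driver. The function name is made up, and the optional second argument exists only so the function can be pointed at a test directory instead of the real /sys.

```shell
#!/bin/sh
# pci_devices_for_driver DRIVER [DRIVERS_DIR]
# Hypothetical helper: prints the PCI addresses (e.g. 0000:04:00.0) of the
# devices bound to DRIVER. DRIVERS_DIR defaults to /sys/bus/pci/drivers.
pci_devices_for_driver() {
    driver_dir="${2:-/sys/bus/pci/drivers}/$1"
    for entry in "$driver_dir"/*; do
        name=${entry##*/}
        case "$name" in
            # Keep only names shaped like domain:bus:device.function.
            [0-9a-f][0-9a-f][0-9a-f][0-9a-f]:[0-9a-f][0-9a-f]:[0-9a-f][0-9a-f].[0-7])
                echo "$name"
                ;;
        esac
    done
}

# Intended use:
#   pci_devices_for_driver iwlagn
```

Entries such as “bind” and “unbind” live in the same directory, which is why the pattern match is there to filter them out.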

At the risk of just saying “I put ‘foo’ in /etc/bar and it worked”, that’s what I did… and it did.

If you have insights on how the overall suspend/hibernate process works, please share. There seems to be a lot of discussion online about particular configuration files and options, but I could not find much info about the overall architecture and the roadmap.

South East Linux Fest

I enjoyed a “Geekin’ Weekend” at South East Linux Fest in Spartanburg SC.

Four of us from TriLUG (Kevin Otte, Jeff Shornick, Bill Farrow and myself) packed into the minivan and made a road trip down to South Carolina on Friday. We got there in time to see some of the exhibits and a few of the Friday afternoon sessions. There was some light mingling in the evening, and then we all wrapped it up to prepare for the big day ahead.

On Saturday, we had breakfast with Jon “Maddog” Hall before he gave the keynote on how using open source can help create jobs. The day was filled with educational sessions. I attended ones on SELinux, Remote Access and Policy, FreeNAS, Arduino hacking, and Open Source in the greater-than-software world. We wrapped it up with a talk on the many ways that projects can FAIL (from a distro package maintainer’s view). But the night was not over… we partied hard in the hotel lounge, rockin’ to the beats of nerdcore rapper “Dual Core”, and then trying to spend the complimentary drink tickets faster than sponsor Rackspace could purchase them.

Sunday was much slower paced, as many had left for home and many others were sleeping off the funk of the previous night’s party. But if you knew where to be, there were door prizes to be scored. I ended up with a book on Embedded Linux.

It was a memorable weekend, for sure. We learned a lot of new tech tricks, and we enjoyed hanging out with the geeks.

Encrypting your entire hard disk (almost)

I have a small netbook that I use when I travel, one of the original Asus EeePC’s, the 900. It has a 9″ screen and a 16GB flash drive. It runs Linux, and it’s just about right for accessing email, some light surfing, and doing small tasks like writing blog posts and messing with my checkbook. And since it runs Linux, I can do a lot of nice network stuff with it, like SSH tunneling, VPN’s, and I can even make it act like a wireless access point.

However, the idea of leaving my little PC in a hotel room while I am out having fun leaves me a little uneasy. I am not concerned with the hardware… it’s not worth much. But I am concerned about my files, and the temporary files like browser cookies and cache. I’d hate for someone to walk away with my EeePC and also gain access to countless other things with it.

So this week, I decided to encrypt the main flash drive. Before, the entire flash device was allocated as one device:

partition 1 – 16GB – the whole enchilada

Here’s how I made my conversion.

(0) What you will need:

- a 1GB or larger USB stick (to boot off of)

- an SD card or USB drive big enough to back up your root partition

(1) Boot the system using a “live USB stick” (you can create one in Ubuntu by going to “System / Administration / Startup Disk Creator”). Open up a terminal and do “sudo -i” to become root.

ubuntu@ubuntu:~$ sudo -i
root@ubuntu:~$ cd /
root@ubuntu:/$

(2) Install some tools that you’ll need… they will be installed in the Live USB session in RAM, not on your computer. We’ll install them on your computer later.

root@ubuntu:/$ apt-get install cryptsetup

(3) Insert an SD card and format it. I formatted the entire card. Sometimes, you might want to make partitions on it and format one partition.

root@ubuntu:/$ mkfs.ext4 /dev/sdb
root@ubuntu:/$ mkdir /mnt/sd
root@ubuntu:/$ mount /dev/sdb /mnt/sd
root@ubuntu:/$

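(4) Back up the main disk onto the SD card. The main disk’s filesystem needs to be mounted, and tar needs to be run from the top of it, so that “.” refers to the main disk rather than to the live session’s own root. The “numeric-owner” option causes the actual owner and group numbers to be stored in the tar file, rather than trying to match the owner/group names to the names from /etc/passwd and /etc/group (remember, we booted from a live USB stick).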

root@ubuntu:/$ tar --one-file-system --numeric-owner -zcf /mnt/sd/all.tar.gz .
root@ubuntu:/$
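One way to make the backup less dependent on which directory you happen to be in is to tell tar where to start with its -C option. This is only a sketch: backup_tree is a name I made up, it assumes GNU tar, and the /mnt/sda1 mount point in the usage comment is an assumption about where you mounted the main disk.

```shell
#!/bin/sh
# backup_tree SRC_DIR ARCHIVE
# Archives SRC_DIR (staying on the one filesystem it lives on) into
# ARCHIVE, storing numeric owner/group IDs. Assumes GNU tar.
backup_tree() {
    tar -C "$1" --one-file-system --numeric-owner -zcf "$2" .
}

# Intended use from the live session (mount point is an assumption):
#   mkdir /mnt/sda1
#   mount /dev/sda1 /mnt/sda1
#   backup_tree /mnt/sda1 /mnt/sd/all.tar.gz
```

The archive still stores paths like ./boot and ./etc, so the restore steps work the same way.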

(5) Re-partition the main disk. I chose 128MB for /boot. The rest of the disk will be encrypted. The new layout looks like this:

partition 1 – 128MB – /boot, must remain unencrypted

partition 2 – 15.8GB – everything else, encrypted

root@ubuntu:/$ fdisk -l
Disk /dev/sda: 16.1 GB, 16139354112 bytes
255 heads, 63 sectors/track, 1962 cylinders
Units = cylinders of 16065 * 512 = 8225280 bytes
Sector size (logical/physical): 512 bytes / 512 bytes
I/O size (minimum/optimal): 512 bytes / 512 bytes
Disk identifier: 0x0002d507
   Device Boot      Start         End      Blocks   Id  System
/dev/sda1   *           1          17      136521   83  Linux
/dev/sda2              18        1962    15623212+  83  Linux
root@ubuntu:/$

(6) Make new filesystems on the newly-partitioned disk.

root@ubuntu:/$ mkfs.ext4 /dev/sda1
root@ubuntu:/$ mkfs.ext4 /dev/sda2
root@ubuntu:/$

(7) Restore /boot to sda1. It will be restored into a “boot” subdirectory, because that’s the way it was on the original disk. But since this is a stand-alone /boot partition, we need to move the files to that filesystem’s root.

root@ubuntu:/$ mkdir /mnt/sda1
root@ubuntu:/$ mount /dev/sda1 /mnt/sda1
root@ubuntu:/$ cd /mnt/sda1
root@ubuntu:/mnt/sda1$ tar --numeric-owner -zxf /mnt/sd/all.tar.gz ./boot
root@ubuntu:/mnt/sda1$ mv boot/* .
root@ubuntu:/mnt/sda1$ rmdir boot
root@ubuntu:/mnt/sda1$ cd /
root@ubuntu:/$ umount /mnt/sda1
root@ubuntu:/$

(8) Make an encrypted filesystem on sda2. We will need a label, so I will call it “cryptoroot”. You can choose anything here.

root@ubuntu:/$ cryptsetup luksFormat /dev/sda2
WARNING!
========
This will overwrite data on /dev/sda2 irrevocably.
Are you sure? (Type uppercase yes): YES
Enter LUKS passphrase: ********
Verify passphrase: ********
root@ubuntu:/$ cryptsetup luksOpen /dev/sda2 cryptoroot
root@ubuntu:/$ mkfs.ext4 /dev/mapper/cryptoroot
root@ubuntu:/$

(9) Restore the rest of the saved files to the encrypted filesystem that lives on sda2. We can remove the extra files in /boot, since that will become the mount point for sda1. We need to leave the empty /boot directory in place, though.

root@ubuntu:/$ mkdir /mnt/sda2
root@ubuntu:/$ mount /dev/mapper/cryptoroot /mnt/sda2
root@ubuntu:/$ cd /mnt/sda2
root@ubuntu:/mnt/sda2$ tar --numeric-owner -zxf /mnt/sd/all.tar.gz
root@ubuntu:/mnt/sda2$ rm -rf boot/*
root@ubuntu:/mnt/sda2$ cd /
root@ubuntu:/$

(10) Determine the UUID’s of the sda2 device and the encrypted filesystem that sits on top of sda2.

root@ubuntu:/$ blkid
/dev/sda1: UUID="285c9798-1067-4f7f-bab0-4743b68d9f04" TYPE="ext4"
/dev/sda2: UUID="ddd60502-87f0-43c5-aa28-c911c35f9278" TYPE="crypto_LUKS" << [UUID-LUKS]
/dev/mapper/root: UUID="a613df67-3179-441c-8ce5-a286c16aa053" TYPE="ext4" << [UUID-ROOT]
/dev/sdb: UUID="41745452-3f89-44f9-b547-aca5a5306162" TYPE="ext3"
root@ubuntu:/$

Notice that you’ll also see sda1 (/boot) and sdb (the SD card) as well as some others, like the USB stick. Below, I will refer to the actual UUID’s that we read here as [UUID-LUKS] and [UUID-ROOT].
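If you would rather not copy the UUID’s by hand, something like the sketch below can pull one out of the blkid listing. The function name uuid_of is hypothetical, and it assumes the “device: KEY="value" …” line format shown above.

```shell
#!/bin/sh
# uuid_of DEVICE
# Hypothetical helper: reads blkid-style lines on stdin and prints the
# value of the UUID="..." field for the line that describes DEVICE.
uuid_of() {
    awk -v dev="$1:" '$1 == dev {
        for (i = 2; i <= NF; i++)
            if ($i ~ /^UUID="/) {
                gsub(/^UUID="|"$/, "", $i)
                print $i
            }
    }'
}

# Intended use:
#   blkid | uuid_of /dev/sda2
```

As far as I know, blkid can also print a single value directly with “blkid -s UUID -o value /dev/sda2”, which may be simpler if your version supports it.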

(11) Do a “chroot” inside the target system. A chroot basically uses the kernel from the Live USB stick, but the filesystem from the main disk. Notice that when you do this, the prompt changes to what you usually see when you boot that system.

root@ubuntu:/$ mount /dev/sda1 /mnt/sda2/boot
root@ubuntu:/$ mount --bind /proc /mnt/sda2/proc
root@ubuntu:/$ mount --bind /dev /mnt/sda2/dev
root@ubuntu:/$ mount --bind /dev/pts /mnt/sda2/dev/pts
root@ubuntu:/$ mount --bind /sys /mnt/sda2/sys
root@ubuntu:/$ chroot /mnt/sda2
root@enigma:/$

(12) Install cryptsetup on the target.

root@enigma:/$ apt-get install cryptsetup
root@enigma:/$

(13) Change some of the config files on the encrypted drive’s /etc so it will know where to find the new root filesystem.

root@enigma:/$ cat /etc/crypttab
cryptoroot UUID=[UUID-LUKS] none luks
root@enigma:/$ cat /etc/fstab
proc /proc proc nodev,noexec,nosuid 0 0
# / was on /dev/sda1 during installation
# UUID=[OLD-UUID-OF-SDA1] / ext4 errors=remount-ro 0 1
UUID=[UUID-ROOT] / ext4 errors=remount-ro 0 1
/dev/sda1 /boot ext4 defaults 0 0
# RAM disks
tmpfs /tmp tmpfs defaults 0 0
tmpfs /var/tmp tmpfs defaults 0 0
tmpfs /var/log tmpfs defaults 0 0
tmpfs /dev/shm tmpfs defaults 0 0
root@enigma:/$

(14) Rebuild the GRUB bootloader, since the files have moved from sda1:/boot to sda1:/ .

root@enigma:/$ update-grub
root@enigma:/$ grub-install /dev/sda
root@enigma:/$

(15) Update the initial RAM disk so it will know to prompt for the LUKS passphrase so it can mount the new encrypted root filesystem.

root@enigma:/$ update-initramfs -u -v root@enigma:/$

(16) Reboot.

root@enigma:/$ exit
root@ubuntu:/$ umount /mnt/sda2/sys
root@ubuntu:/$ umount /mnt/sda2/dev/pts
root@ubuntu:/$ umount /mnt/sda2/dev
root@ubuntu:/$ umount /mnt/sda2/proc
root@ubuntu:/$ umount /mnt/sda2/boot
root@ubuntu:/$ umount /mnt/sda2
root@ubuntu:/$ reboot

When it has shut down the Live USB system, you can remove the USB stick and let it boot the system normally. If all went well, you will be prompted for the LUKS passphrase a few seconds into the bootup process.

A Tech Trifecta

Dropbox + KeePass + VeraCrypt

I would like to introduce three software packages that are profoundly useful in their own rights, but then go a step further and show how these three tools can be used together to revolutionize how you keep track of your secrets & finances in the digital age.

The first tool is Dropbox, a cloud service where you can store a folder of your own personal files. They offer a free service where you can keep 2GB of files, and you can pay for more storage.

You can access the files from anywhere using a web interface. Or better, you can install the Dropbox program, and it will automatically keep a local copy of all of your stuff up to date in a folder on your computer (Windows, Mac or Linux). If you’re offline for a bit, no problem, because you still have your local copy of the files.

The real magic happens when you connect multiple computers to the same Dropbox account — say, your home PC and your office PC. Dropbox keeps the files in sync for you.

There is even a Dropbox app for your iPhone, so you can access your files on the go.

If I asked you how many passwords you have, you might think that you have a dozen or so. One day, I decided to make a list of every password I had (including multiple accounts where I happened to use the same password). I was shocked to find that I had more than 100.

PRO TIP – Most people use the same five passwords over and over… they don’t bother remembering which ones they used for a particular web site… they just try all five of their “not so important” passwords. That means that if I want to steal something from you, I can just set up a service and invite you to log in. I make your next login attempt fail, so you’ll get confused and enter all five of your “not so important” passwords, and possibly your “good” ones, too. Then I can go to eBay and PayPal and Amazon with your username and all of your passwords, and I have a pretty good chance of logging into your account.

For this reason, you should use a different password at EVERY web site.

KeePass is an open source program that stores your passwords. The program stores all of that information in a single data file that you lock using a single “master password”. This should be the only password you ever remember. The rest of them should be long strings of gibberish characters.

KeePass has a nice “generate” feature which will come up with really good passwords like “an@aiph5Ph”.
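If you are curious what “long strings of gibberish” look like outside of KeePass, here is a rough command-line sketch. This is not how KeePass generates its passwords; the function name, the character set and the default length are all my own choices, and it assumes a Linux-style /dev/urandom.

```shell
#!/bin/sh
# random_password [LENGTH]
# Prints LENGTH (default 10) random characters drawn from letters, digits
# and a few symbols. Reads 4096 random bytes, so it is only meant for
# lengths well under a few hundred characters.
random_password() {
    head -c 4096 /dev/urandom \
        | LC_ALL=C tr -dc 'A-Za-z0-9@#~%+=' \
        | cut -c "1-${1:-10}"
}

random_password 10
```

Every run prints something different, which is the point. You would still paste the result into your password manager rather than try to remember it.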

KeePass is available for Windows, Mac and Linux (KeePassX), and there are a couple of iPhone variants (I recommend “MyKeePass”).

KeePass stores four main fields: the URL, user name, password, and notes. That means that I can store an entry like this for my bank:

URL = https://www.FirstFederalLovesYou.com/personal/login

name = alan_porter

password = uG7pi~ji9a

notes = security question, pet’s name = teabag

These days, it’s really easy to get confused about the main web address that you’re supposed to use to log in to your bank. They make things confusing by using fancy URL’s like “FirstFederalLovesYou.com” instead of “FirstFederal.com”. If you have not had your coffee one morning and you type “FirstFederalLovesMe.com” by mistake (“Me” instead of “You”), you’ll find yourself logging into a phishing site, which is trying to steal your password.

With KeePass, there is no room for this sort of error. You double click on the URL and a browser opens on that site. You double click on the user name and it copies the user name to the clipboard. Paste it in the browser. Similarly, double click on the password and paste it in the browser. If your bank uses “security questions”, you can store your answers in the notes section.

PRO TIP – Don’t use truthful answers for “security” questions… treat these as extended passwords, just like you’d see in the old spy movies (he says “the sky looks like rain”, and you answer “that helps the bananas grow”). The answers do not even need to make sense… they just need to be remembered… in your head (bad) or in your KeePass file (good).

And no, I have never had a pet named “teabag”.

The last tool I would like to talk about is VeraCrypt*, a free package that allows you to create an encrypted volume on a file or a disk.

NOTE: the original version of this blog post recommended TrueCrypt, which has been discontinued — VeraCrypt is a drop-in replacement with several improvements, most notably it is open source and it has undergone very close scrutiny and security audits.

You can create a VeraCrypt file with a name like “mysecrets.vc” that you store on your desktop. You give it a size and a password. Then when you open that file, you’ll have to enter the password, and then it will appear as a Windows drive letter. On a Mac or Linux, it will show up as a folder in /Volumes or in /media. When you close it, the drive letter is gone, and your secrets are stored, encrypted, in the “mysecrets.vc” file.

You can store this “mysecrets.vc” file anywhere you want… on your desktop, on a USB flash drive, or even on your Dropbox account.

You might also want to use VeraCrypt to encrypt an entire device, like a USB flash drive or SD card — nice.

THE TRIFECTA

Using these three tools together, suddenly we have a very secure way to do our online banking.

Regardless of what computer you’re using — Mac, Windows, Linux, desktop, laptop, at home, at work, whatever — you now have access to your passwords, and all of the other files that you need: Quicken or GnuCash, the PDF files of your bills, personal files, etc.

EXAMPLE 1 – PAYING BILLS FROM A WINDOWS PC

A typical online bill-paying session might look like this:

- Look in your Dropbox for your KeePass password file, say D:\mypasswords.kdb. Open it with KeePass.

- Look in your Dropbox for your VeraCrypt volume, say D:\mybills.vc. Open it with VeraCrypt. It will be mounted as drive V.

- Use your normal accounting package (Quicken or Gnucash) to open your checkbook, say V:\checkbook.qdf or V:\checkbook.gc.

- Find your bank password in KeePass. Click on the safe URL, copy the username and password from KeePass to the browser.

- When you need to save a statement or a bill, save into the VeraCrypt volume on the V drive, like in V:\statements.

EXAMPLE 2 – LOOKING UP A PASSWORD FROM THE IPHONE

Say you’re out and about, and you want to use your iphone to log into a web site that you don’t know the password for (of course you don’t… because you use GOOD and UNIQUE passwords like “taeYi#c6We”, right?).

- First, you use the iPhone Dropbox app to locate your KeePass file. Click on the filename, and the app will say “I don’t know what kind of file this is”. But it will show you a little “chain link” icon in the bottom. Click this icon to get a web URL for that one file. The URL will be copied to your clipboard.

- Next, run MyKeePass and click “+” to add a new KeePass file. Select “download from WWW” and paste the URL that you just got from Dropbox.

Now, you can open your KeePass file from anywhere! You’ll have to enter the file’s master password that you set up earlier. After that, you’ll be able to browse the information in the KeePass file.

Note that you can only READ the file using the “download from WWW” method. If you need to save a new password or some other information, you’ll need to write it down on a piece of paper until you are back at your PC (no biggie — that’s pretty rare).

A NOTE ABOUT SECURITY

Some readers might note that we just shared our KeePass password file with the entire world, when we told Dropbox to give us a URL. Someone else could use that same (random-ish) URL to download our KeePass file. Since this file is encrypted using the master password, it will look like gibberish to anyone but you. For this reason, we should make sure that we use a VERY STRONG password for the KeePass file… not your dog’s name (remember, mine is “teabag”).

Using SSH to tunnel web traffic

Every once in a while, I learn something new and magical that SSH can do. For a while now, I have been using its “dynamic port forwarding” capability, and I wanted to share that here.

Dynamic port forwarding is a way to create a SOCKS proxy that listens on your local machine, and forwards all traffic through the SSH tunnel to the remote side, where it will be passed along to the remote network.

What this means is that you can tell your web browser to send some – or all – of your web requests through the tunnel, and the SSH daemon on the other side will send those requests out to the internet for you, and then it will send the responses back through the tunnel to your browser.

There are several practical uses for SSH tunneling. Here are a few real-world examples of where I have used tunnels:

- You may be behind a firewall that blocks web sites that you want to visit. I was at a company that had a web proxy that blocked some “hacking” web sites, when I really needed to look up some information on computer vulnerabilities, to make sure that their systems were properly patched. Using the SSH tunnel bypassed that company’s web proxy.

- You may not trust a hotel’s internet service. In fact, you SHOULD NOT trust a hotel’s internet service. They keep records of sites that you visit, ostensibly to keep a customer profile of your interests. Some also do deep packet inspection to observe words in the web pages and emails that you read. Intrusive marketing!

- You may want to work from home, and access work lab resources from home. If you can SSH into one machine at work, then you can access the web resources inside that network.

- Working on a cluster of machines, where only one of them is directly accessible from your desk. It stinks to have to do your work in a noisy lab. Why not shell into the gateway machine and tunnel your web traffic through that SSH connection?

- You may want to access a web interface to something at home, like a printer… or an oven. If you expose the SSH service on your router, you can SSH in and easily access the web interfaces of everything in your home.

- You may want a web interface on some service on an internet-facing machine, but you don’t want to expose it to everyone. Maybe you want phpMyAdmin on a server, but you don’t want everyone to see it. So have the web service listen on the loopback address (127.0.0.1) only, and use an SSH tunnel to get to it from wherever you are.

- Your ISP throttles web traffic from a particular site. In my case, it was an overseas movie streaming site, and my ISP would limit my traffic to 300kbps for 30 seconds, followed by nothing for 30 seconds (they do this to discourage you from watching movies over the internet). Sending the traffic through an SSH tunnel means the ISP only sees an encrypted stream of something on port 22 — they have no idea that the content is actually a movie streamed from China on port 80.

- You may live in India, but want to watch a show on Hulu (which only serves the US market). By tunneling through a US-based server, it will appear to Hulu that you’re in the US.

- You may be visiting China, and you want to bypass the Great Firewall of China. Believe it or not, my humble domain was not accessible directly from Shanghai.

- You may get a “better” route by going through a proxy. This is speculation on my part… but I did this once when I was in a free-for-all online ticket purchase. I wondered if I might get a faster route from my remote data center than I would get directly from my ISP.

So, how do you create a tunnel? It’s super easy.

Create an SSH session with a tunnel.

On Linux or a Mac, open a shell and type “ssh -D 10000 username@remotehost.com”.

On Windows*, you can run Putty (an SSH client). In the “connection / SSH / tunnels” menu, click on “dynamic”, then enter source port = 10000, then click “add”. You’ll see a “D10000” in the box that lists your forwarded ports. (* Putty is also available for Linux.)

That’s it… you’re done.
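If you only want the tunnel and not an interactive shell, OpenSSH has a couple of standard flags that are often combined with -D. Here is a minimal sketch, assuming OpenSSH on Linux or a Mac. The helper name tunnel_cmd is made up for this example, and the username and host are the same placeholders as above. It only prints the command, so you can look it over before running it yourself.

```shell
#!/bin/sh
# Build (but do not run) an ssh command that opens a SOCKS proxy.
#   -D PORT  dynamic port forwarding: ssh listens on localhost:PORT as a SOCKS proxy
#   -N       do not run a remote command (tunnel only, no shell)
#   -f       drop to the background once authentication is done
# tunnel_cmd is a hypothetical helper name, not part of OpenSSH.
tunnel_cmd() {
    port=$1
    target=$2
    printf 'ssh -D %s -N -f %s\n' "$port" "$target"
}

# Prints: ssh -D 10000 -N -f username@remotehost.com
tunnel_cmd 10000 username@remotehost.com
```

Leave off -N and -f if you would rather keep a normal login shell open and close the tunnel by logging out.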

Tell the browser to use the tunnel.

The easiest way to get started with SOCKS proxies is to set it in the “preferences / advanced / network / connection settings” menu in Firefox (or the “preferences / under the hood / change proxy settings” menu in Google Chrome). In both cases, you want to set “manual proxy configuration” and SOCKS host = “localhost” and SOCKS port = 10000. If there is a selection for SOCKS v5, then check it.

Now, all web requests will go to a proxy that is running on your local machine on port 10000. On Linux, if you do a “netstat -plnt | grep 10000”, you’ll see that your SSH or Putty session is listening on port 10000. SSH then forwards the web request to the remote machine, where sshd will send it on out to the internet. Web responses will come back along that same path.

Anyone on your local network will only be able to see one connection coming from your machine: an encrypted SSH session to the remote. They will not see the web requests or responses. This makes it a great tool for using on public networks, like coffee shops or libraries.

Test it

Point your browser to a site that tells you your IP address, such as whatismyip.com. It should report the IP address of the remote server that you are connecting through, and not your local IP address.
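The same check can be done from a terminal with curl, which can talk to a SOCKS proxy directly. This is only a sketch: the helper names proxy_url and show_exit_ip are made up, and ifconfig.me is my assumption for a service that answers with a bare IP address (any similar plain-text service should do). Nothing touches the network until you call show_exit_ip yourself.

```shell
#!/bin/sh
# proxy_url and show_exit_ip are hypothetical helper names.

# Build the proxy URL for curl. The socks5h:// scheme asks curl to let the
# proxy resolve hostnames too, so DNS lookups also go through the tunnel.
proxy_url() {
    printf 'socks5h://localhost:%s' "$1"
}

# Ask a "what is my IP" service for our address, going through the tunnel.
# ifconfig.me is an assumption; swap in whatever service you prefer.
show_exit_ip() {
    curl --silent --max-time 10 --proxy "$(proxy_url "$1")" https://ifconfig.me
}

# Usage, with the tunnel from above already running:
#   show_exit_ip 10000
```

If the tunnel is working, the address printed should be the remote server’s, just like in the browser test.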

Advanced – quickly selecting proxies using FoxyProxy or Proxy Switchy!

It can be a hassle to go into the preferences menu to turn the proxy on and off. So there are browser plug-ins that allow you to quickly switch between a direct connection and using a SOCKS proxy.

For Firefox, there is the excellent “FoxyProxy”. For Chrome, you’ll want “Proxy Switchy!”. Both plug-ins allow you to select a proxy using a button on the main browser window.

But there’s more… they also allow you to build a list of rules to automatically switch based on the URL that you are browsing. For example, at work, I set up a rule to route all web requests for the lab network through my SSH session that goes to the lab gateway machine.

It works like this. First, I set up a tunnel to the lab gateway machine:

- ssh -D 10001 alan@192.168.100.1 (lab gateway)

Then I set up the following FoxyProxy rules:

- 192.168.100.* – SOCKS localhost:10001 (SSH to lab)

- anything else – direct

Now, I can get to the lab machines using the lab proxy, and any other site that I access uses the normal corporate network.
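If it helps to see the rule logic spelled out, here is a tiny sketch of the “first matching pattern wins” idea using a shell case statement. This is just an illustration of the two rules above. It is not how FoxyProxy is implemented, and route_for is a made-up name.

```shell
#!/bin/sh
# route_for is a hypothetical helper that mimics the two rules above:
# the first pattern that matches the host decides where the request goes.
route_for() {
    case "$1" in
        192.168.100.*) echo "SOCKS localhost:10001" ;;  # lab network: use the SSH tunnel
        *)             echo "direct" ;;                 # anything else: no proxy
    esac
}

route_for 192.168.100.42   # prints: SOCKS localhost:10001
route_for www.debian.org   # prints: direct
```

Adding a second tunnel (say, one to home) would just be another pattern line above the catch-all.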

Closing thoughts

If you like Putty or FoxyProxy or Proxy Switchy!, please consider donating to the projects to support and encourage the developers.

How to resize a VirtualBox disk

I primarily run Linux, but occasionally need to run something in Windows. So I use the open source VirtualBox program to run Windows XP in a box (where it belongs).

Most of the time, I create a new VM for a specific task. For example, around tax time, I might create a VM that has nothing in it except Windows XP and Turbo Tax or Tax Cut (or better yet, Tax Act). To make this a little easier, I created a VM that has a fresh copy of Windows XP on a 10GB hard disk, and then made a compressed copy of the disk image file. It compresses nicely down to 700MB, just about right to fit on a CD. So step one of doing my taxes is to copy this disk image file, uncompress it, and create a new VM that uses that disk.
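The compress-once, unpack-each-time routine might look something like the sketch below. I am assuming gzip as the compressor here, and every file name is a placeholder. A tiny stand-in file plays the part of the real 10GB image so the commands can be tried anywhere.

```shell
#!/bin/sh
# Assumptions: gzip is the compressor, and all file names are placeholders.
workdir=$(mktemp -d)
template="$workdir/winxp-template.vdi"

# Stand-in for a real disk image, so this sketch can run without VirtualBox.
printf 'pretend this is a 10GB disk image\n' > "$template"

# One time only: keep a compressed master copy of the fresh install.
gzip -c "$template" > "$template.gz"

# Each time a fresh VM is needed: unpack a new working copy from the master.
gunzip -c "$template.gz" > "$workdir/taxes.vdi"

# cmp exits 0 when the two files are byte-for-byte identical.
cmp "$template" "$workdir/taxes.vdi" && echo "copy matches the template"
```

One thing to watch for: VirtualBox may complain about a duplicate UUID if it already knows about another copy of the same image. If that happens, “VBoxManage internalcommands sethduuid” can give the copy a new one.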

That is, I treat the Windows OS as disposable — create a VM, use it, save my files somewhere else and then throw the VM away.

Every once in a while, though, I need more than the 10GB that I originally allocated to the VM. For example, iTunes usually needs a lot more storage than that. So I need to resize the Windows C: drive. It turns out that this is very easy to do with the open source tool “gparted”. This comes included in Ubuntu Live CDs, so I just boot the VM into an Ubuntu Live CD session and prepare the new, larger disk.

Here’s the step-by-step, gleefully stolen from the VirtualBox support forums.

- Make sure you have an Ubuntu Live CD, or a “System Rescue CD”.

- Create a new hard disk image (*.vdi file) using Virtual Disk Manager. In VirtualBox, go to File / Virtual Disk Manager.

- Set your current VM to use the new disk image as its second hard disk and the Ubuntu Live CD (or System Rescue CD) ISO file as its CDROM device.

- Boot the VM from the CDROM.

- If you’re using the System Rescue CD, start a graphical “X-windows” session by typing “startx” at the command prompt.

- When your X-windows starts up, open up a terminal and type “gparted”.

- You’ll need to create a partition table on the new disk. So in gparted, select the new disk and then Device / Create Partition Table.

- Then select the Windows partition and choose copy.

- Select the second hard disk, right click on the representation of the disk and click paste.

- Gparted will prompt you for the size of the new partition. Drag the slider to the max size.

- Click apply and wait…

- Important – when it is done, right click on the disk and choose Manage Flags, and select Boot.

- Exit gparted and power off the VM.

- Change the VM settings to only have one disk (the new bigger disk) and un-select the ISO as the CDROM.

- Boot the VM into your Windows install on its new bigger disk! The first time it boots up, Windows may do a disk check and reboot.
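The two GUI steps near the top of the list (create the new disk image, attach it as the second hard disk) can also be done from the host’s command line with VBoxManage. Treat this as a sketch under assumptions: the VM name, file name, size and controller name are all placeholders that you would replace with your own, and older VirtualBox releases call the first subcommand “createhd” instead of “createmedium”. By default it only prints the commands. Set DRY_RUN=0 to actually run them.

```shell
#!/bin/sh
# run() prints the command unless DRY_RUN=0, so nothing changes by accident.
DRY_RUN=${DRY_RUN:-1}
run() {
    if [ "$DRY_RUN" = "1" ]; then
        echo "+ $*"
    else
        "$@"
    fi
}

VM="WinXP"                    # placeholder: the name of your VM
NEW_DISK="winxp-big.vdi"      # placeholder: the new, larger disk image
SIZE_MB=20480                 # placeholder: 20GB, given in megabytes
CONTROLLER="IDE Controller"   # placeholder: check your VM's storage settings

# Create the new, empty disk image.
run VBoxManage createmedium disk --filename "$NEW_DISK" --size "$SIZE_MB" --format VDI

# Attach it as the second hard disk on the VM's controller.
run VBoxManage storageattach "$VM" --storagectl "$CONTROLLER" \
    --port 0 --device 1 --type hdd --medium "$NEW_DISK"
```

The copy in gparted still happens inside the VM exactly as described above. This only saves a few clicks on the host side.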

Once you’re happy with the new larger disk, you might want to delete the old, smaller one.

This method should work the same, regardless of whether the host OS is Linux, Windows or Mac OS.

Keep that Ubuntu Live CD around — it really comes in handy!